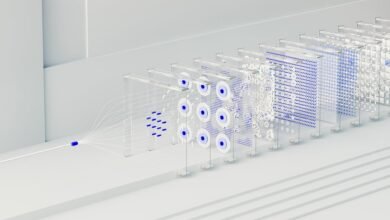

Neural Flow 3202560223 Apex Node

The Neural Flow 3202560223 Apex Node bridges on-device inference and efficient data and model management for edge AI. It emphasizes adaptive quantization, modular accelerators, and preserving model fidelity without sacrificing latency. The design integrates security, governance, and clear interface contracts to support scalable deployments, and real-world playbooks enable reproducible configurations and continuous monitoring. Balancing speed, power, and accuracy raises integration and governance challenges that only surface in practice, and those challenges deserve a closer look.

What the Neural Flow Apex Node Delivers for Edge AI

The Neural Flow Apex Node provides a streamlined hardware-software bridge that accelerates edge AI workloads by combining on-device inference with efficient data and model management. It prioritizes model fidelity at the edge and low latency, and its value is judged on throughput, reliability, and modular interoperability.

The approach is collaborative, analytical, and purpose-driven, pairing technical clarity with a preference for scalable, autonomous edge deployments that teams can shape to their own needs.

How Apex Node Balances Speed, Power, and Accuracy

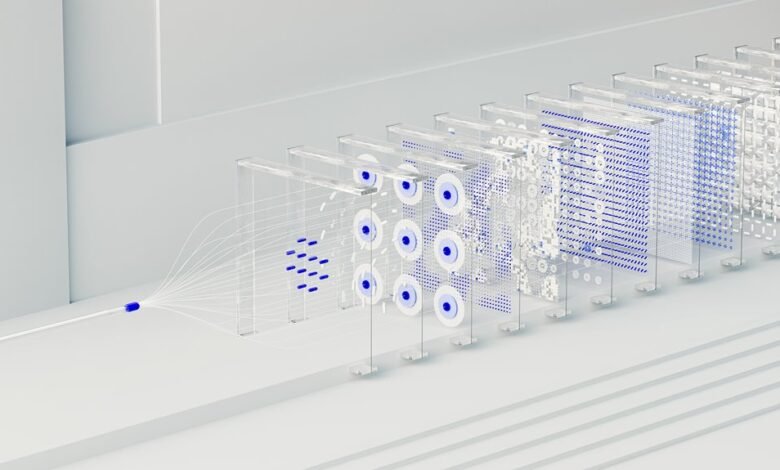

Apex Node balances speed, power, and accuracy by combining on-device inference, adaptive quantization, and modular accelerator resources, with the goal of keeping latency low without compromising model fidelity.

The design makes deliberate speed tradeoffs and tunes accuracy where it matters most, matching algorithmic strategies to hardware constraints.
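The article does not describe Apex Node's actual quantization scheme. As a hypothetical illustration of the tradeoff, the sketch below assumes symmetric per-tensor integer quantization and a worst-case reconstruction-error tolerance, then picks the narrowest bit width that stays within that tolerance. The function names and candidate bit widths are illustrative assumptions, not part of any Apex Node API.

```python
def quantize_symmetric(weights, bits):
    """Map floats to signed integers in [-qmax, qmax] with a single shared scale."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from quantized integers."""
    return [v * scale for v in q]


def max_abs_error(weights, bits):
    """Worst-case absolute error introduced by quantizing at `bits` width."""
    q, scale = quantize_symmetric(weights, bits)
    return max(abs(w - r) for w, r in zip(weights, dequantize(q, scale)))


def choose_bit_width(weights, tolerance, candidates=(2, 4, 8, 16)):
    """Hypothetical adaptive policy: narrowest bit width whose error fits the tolerance."""
    for bits in sorted(candidates):
        if max_abs_error(weights, bits) <= tolerance:
            return bits
    return None  # no candidate is accurate enough; keep float weights


weights = [0.5, -1.0, 0.25, 0.75]
print(choose_bit_width(weights, tolerance=0.1))
```

Narrower integers generally mean less memory traffic and cheaper arithmetic on edge accelerators, so a tighter tolerance trades speed and power for accuracy. Real systems often use per-channel scales and calibration data rather than a single error bound.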

Coordination across components yields predictable performance, transparent metrics, and scalable power management, so the node can be deployed across a range of resource budgets.
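Deploying "at varying resource budgets" can be pictured as choosing among precompiled model variants. The sketch below is hypothetical: the `Variant` type, the selection rule (highest accuracy that fits both a power and a latency budget), and every number in the usage example are placeholders, not Apex Node measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    power_w: float     # average power draw (placeholder figure)
    latency_ms: float  # per-inference latency (placeholder figure)
    accuracy: float    # validation accuracy in [0, 1] (placeholder figure)


def select_variant(variants, power_budget_w, latency_budget_ms):
    """Return the most accurate variant that fits both budgets, or None if none fit."""
    feasible = [
        v for v in variants
        if v.power_w <= power_budget_w and v.latency_ms <= latency_budget_ms
    ]
    if not feasible:
        return None
    # Prefer higher accuracy; on ties, prefer the lower-power variant.
    return max(feasible, key=lambda v: (v.accuracy, -v.power_w))


variants = [
    Variant("fp16", power_w=9.0, latency_ms=18.0, accuracy=0.91),
    Variant("int8", power_w=4.5, latency_ms=7.0, accuracy=0.89),
    Variant("int4", power_w=2.5, latency_ms=4.0, accuracy=0.84),
]
print(select_variant(variants, power_budget_w=5.0, latency_budget_ms=10.0))
```

The same variant list then serves both a battery-powered device and a mains-powered gateway, and only the budgets change.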

Deploying the Apex Node: Integration, Security, and Reliability

Practical deployment requires a cohesive integration strategy that aligns hardware capabilities with system-wide security and reliability objectives. That strategy emphasizes modular deployment, rigorous interface contracts, and transparent data lineage to support auditable governance. A collaborative framework coordinates developers, operators, and security teams. Together they refine a deployment approach that balances flexibility with robust controls while prioritizing reliability and measurable risk reduction.
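One common way to make data lineage auditable is a hash-chained log, where each entry commits to its configuration and to the entry before it. The article does not say how Apex Node records lineage. The following is a minimal sketch under that assumption, and `append_record` and `verify` are hypothetical helpers built only on Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json


def content_hash(obj) -> str:
    """SHA-256 over canonical JSON, so equal configs always hash identically."""
    canonical = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(log, artifact, config):
    """Append a lineage entry whose hash covers its config and the previous entry."""
    parent = log[-1]["record_hash"] if log else None
    body = {"artifact": artifact, "config_hash": content_hash(config), "parent": parent}
    record = dict(body, record_hash=content_hash(body))
    log.append(record)
    return record


def verify(log) -> bool:
    """Recompute every hash; an edited, dropped, or reordered entry breaks the chain."""
    parent = None
    for rec in log:
        body = {
            "artifact": rec["artifact"],
            "config_hash": rec["config_hash"],
            "parent": rec["parent"],
        }
        if rec["parent"] != parent or rec["record_hash"] != content_hash(body):
            return False
        parent = rec["record_hash"]
    return True


log = []
append_record(log, "detector-v1", {"bits": 8, "batch": 1})
append_record(log, "detector-v2", {"bits": 4, "batch": 1})
print(verify(log))
```

Auditors only need the log and the configs to re-derive every hash. Production systems would also sign entries so that an attacker cannot simply rebuild the whole chain.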

Real-World Use Cases and Deployment Playbooks for Neural Flow Apex Node

Real-world use cases for the Neural Flow Apex Node illustrate how modular analytics, edge-to-core orchestration, and secure data pipelines converge to support mission-critical workloads.

Deployment playbooks emphasize reproducible configurations, continuous monitoring, and risk-aware rollouts.
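A risk-aware rollout is often implemented as a canary gate: a new build serves a small share of traffic and is promoted only if its monitored metrics stay within limits. The playbooks' actual criteria are not given, so the thresholds below (10% p95 latency regression, 1% error rate) and the function names are hypothetical. The gate assumes non-empty latency samples for both baseline and canary.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile for p in (0, 100]; assumes a non-empty sample list."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def canary_decision(baseline_ms, canary_ms, canary_errors, canary_requests,
                    max_latency_regression=1.10, max_error_rate=0.01):
    """Return 'promote', 'rollback', or 'hold' for a canary deployment."""
    if canary_requests == 0:
        return "hold"  # no traffic yet, so there is no evidence either way
    if canary_errors / canary_requests > max_error_rate:
        return "rollback"
    if percentile(canary_ms, 95) > max_latency_regression * percentile(baseline_ms, 95):
        return "rollback"
    return "promote"


baseline = list(range(10, 30))        # illustrative latency samples in ms
slower = [x + 10 for x in baseline]   # a canary that regressed by 10 ms
print(canary_decision(baseline, baseline, canary_errors=0, canary_requests=100))
print(canary_decision(baseline, slower, canary_errors=0, canary_requests=100))
```

Gating on a tail percentile rather than the mean catches regressions that hit only a fraction of requests, which matter most for latency-sensitive edge workloads.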

Key considerations include managing edge latency and applying model compression techniques to balance performance against resource limits, while sustaining governance, collaboration, and rapid iteration across cross-functional teams.
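The article does not name the compression techniques it has in mind. Unstructured magnitude pruning is one widely used example, shown here as a minimal sketch on a flat list of weights. The `sparsity` parameter and the tie-breaking rule are illustrative choices.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of weights."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices of the k smallest |w|; ties are broken by index so results are deterministic.
    order = sorted(range(len(weights)), key=lambda i: (abs(weights[i]), i))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


w = [0.9, -0.05, 0.3, -0.7, 0.01, 0.2]
print(magnitude_prune(w, 0.5))
```

Zeroed weights only reduce latency when the runtime or accelerator can skip them (for example, through sparse kernels or structured pruning patterns), so pruning is usually paired with fine-tuning and measured on the target device.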

Conclusion

In a landscape of gleaming accelerators and airtight governance, the Neural Flow Apex Node quietly proves the obvious: speed, power, and accuracy can coexist, but only when disciplined collaboration replaces bravado. Its modular design, auditable security, and real-time management show that edge AI fidelity need not come at the cost of latency. The system's practicality outpaces its swagger, turning ambitious promises into reproducible results. Ironically, its most disruptive feature may be restraint.